After the AI DOC

Current AI safety approaches focus on aligning models and regulating platforms. While necessary, these strategies do not redistribute power or address the structural concentration of influence over human cognition. This article argues that a critical layer is missing: user-controlled, interface-level governance operating above the webpage. It proposes a portable trust layer composed of primitives such as contextual overlays, cross-site provenance tracking, community-driven verification, visible AI agent labeling, and durable “monuments” for evidence. Together, these enable distributed verification and coordination independent of platform control. Without such infrastructure, AI governance remains vertically integrated, leaving users dependent on centralized actors to detect and mitigate harm after it has already spread. What has changed is that this layer is now practically buildable. The tools, interfaces, and coordination mechanisms required to prototype it are accessible, and early design choices are not yet fixed. The article concludes that if AI poses systemic risks to cognitive autonomy, then safety must include architectural counter-power. The shape of this emerging trust layer will be determined by those who choose to build it.

2/11/2026 · 7 min read

AI Safety Cannot Be Centralized: Why the Internet Needs a Human-Centered Trust Layer

The Center for Humane Technology (CHT) has done important work diagnosing the dangers of AI-driven manipulation, synthetic media, and cognitive capture. The framing of AI as a threat to democratic cognition is not only compelling but also accurate.

But there is a structural gap in the proposed solution space.

Most current AI safety proposals, including those advanced by CHT, operate at two layers:

The model layer – alignment, guardrails, testing.

The regulatory layer – liability, disclosure mandates, platform accountability.

Both are necessary.

Neither redistributes power.

If one of the core risks to democracy is the large-scale capture or manipulation of human cognition, then solutions that leave governance inside centralized corporate or regulatory institutions risk stabilizing the very architecture that enables that concentration of influence.

Liability constrains behavior. Guardrails civilize deployment. Regulation moderates excess.

But none of these create architectural counter-power, meaning structural mechanisms that distribute enforcement and verification capacity beyond centralized platforms.

There is a missing lever: user-controlled, decentralized interface-layer governance, that is, governance mechanisms that operate at the browser or user-interface level rather than inside models or platforms.

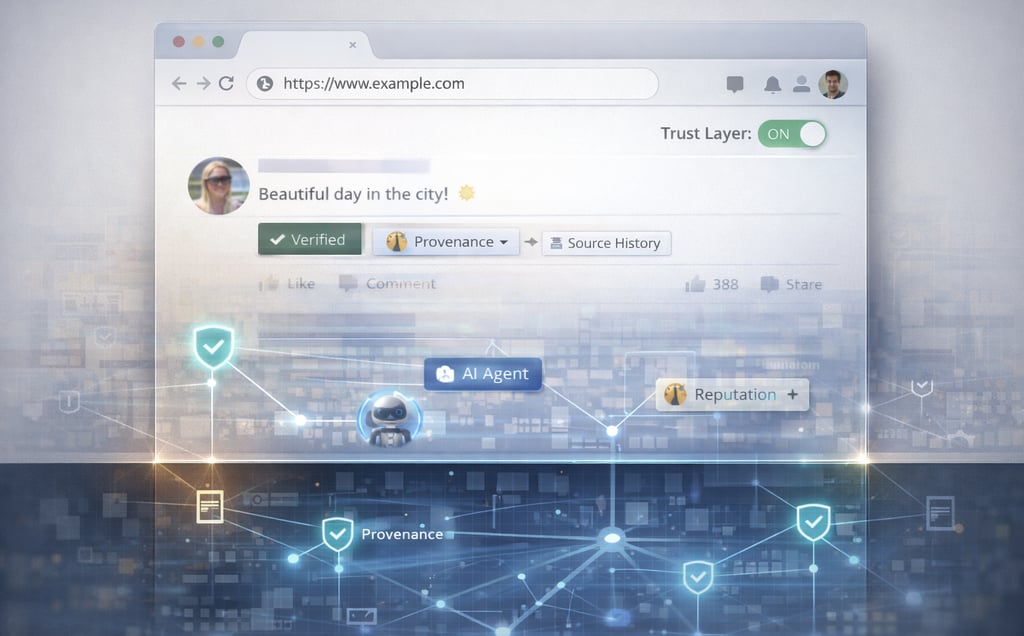

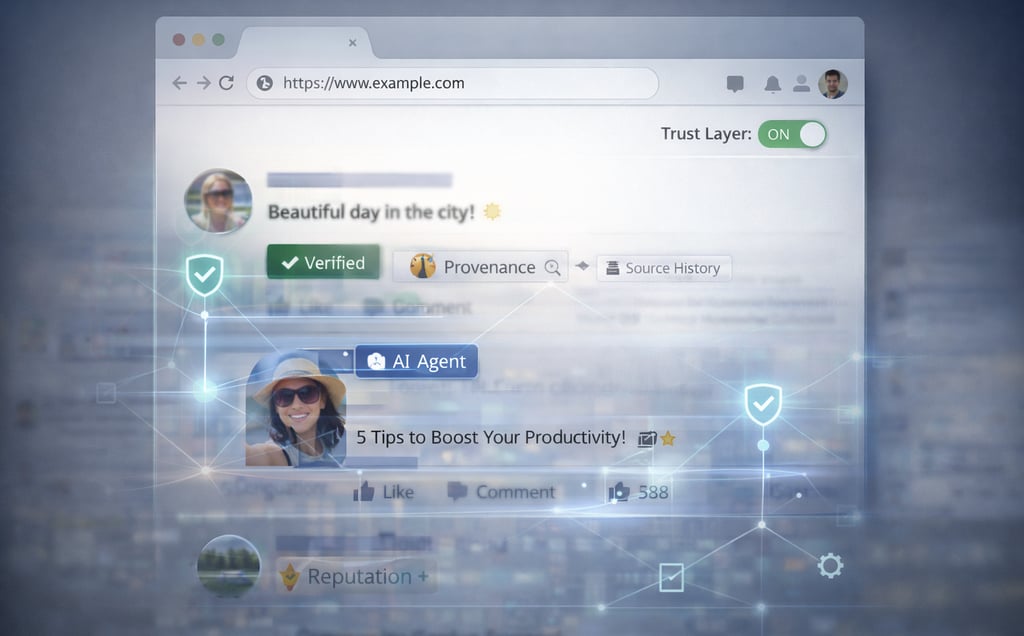

A portable trust layer above the webpage, capable of immediately flagging a viral AI impersonation scam or deepfake election clip across sites.

And for the first time, this lever is accessible.

The tools to build interface-layer systems are no longer confined to large institutions.

Open-source frameworks, browser extensions, AI-assisted coding, and low-cost compute have made it possible for small teams, and even individuals, to prototype and deploy new interface primitives.

This is not a distant research agenda.

This is a buildable layer. Now.


Concretely, such a layer would enable:

Cross-site provenance tracking – for example, clicking a small, familiar-looking icon next to a viral video and instantly seeing where it first appeared, how it spread, and whether trusted communities have verified or challenged it, all within a layout that looks and feels like the web you are already accustomed to.

Community-driven verification – imagine a Wikipedia-style layer that appears when a sensational health claim is trending, showing quick community notes like “Doctors in this field dispute this” or “Satire account,” without leaving the page you’re on.

Visible AI agent labeling – for example, when interacting with a customer support bot, social media account, or even a persuasive political thread, a small clear badge indicates “AI-generated” or “Autonomous agent,” so you always know whether you’re engaging with a person or software.

Distributed reputation graphs – think of it like a portable “trusted by” signal that travels with creators and sources across platforms, so if a science communicator has built credibility over years, that trust signal follows them whether you see them on YouTube, X, or a new platform.

Contextual overlays independent of platform control – similar to a browser extension that lets you toggle on community-curated context while shopping, reading news, or watching a clip, adding lightweight annotations and trust signals without changing the familiar look and feel of the web you already use.

One more primitive completes the set: digital monuments. A monument is a permanent evidentiary object where claims, sources, contradictions, and outcomes can accumulate over time. Where overlays make verification visible in the moment, monuments make verification durable – a persistent boundary object that journalists, communities, researchers, and AI systems can all reference without losing provenance.
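To make the provenance and community-verification primitives above more concrete, here is a minimal Python sketch of the record a portable overlay might keep for one piece of viral content: where it first appeared, the order in which it spread, and what reviewing communities have said about it. It assumes content is identified by a SHA-256 fingerprint of its bytes (which only matches byte-identical copies; re-encoded video would need perceptual hashing) and that notes carry a simple "verified" or "challenged" verdict. Every class and function name here is hypothetical and illustrative, not part of any published implementation.

```python
# Minimal sketch of an overlay's provenance record (all names hypothetical).
import hashlib
from dataclasses import dataclass, field
from datetime import datetime


def fingerprint(content: bytes) -> str:
    """Identify content by its SHA-256 digest (matches exact copies only)."""
    return hashlib.sha256(content).hexdigest()


@dataclass(frozen=True)
class Sighting:
    url: str           # where the overlay observed the content
    seen_at: datetime  # when it was observed there


@dataclass(frozen=True)
class CommunityNote:
    community: str  # assumed: name of the reviewing community
    verdict: str    # assumed vocabulary: "verified" or "challenged"
    text: str       # short note shown in the overlay


@dataclass
class ProvenanceRecord:
    content_id: str
    sightings: list[Sighting] = field(default_factory=list)
    notes: list[CommunityNote] = field(default_factory=list)

    def first_appearance(self) -> Sighting:
        # Earliest observed sighting; raises ValueError if there are none.
        return min(self.sightings, key=lambda s: s.seen_at)

    def spread_path(self) -> list[str]:
        # URLs in the order the content was observed across sites.
        return [s.url for s in sorted(self.sightings, key=lambda s: s.seen_at)]

    def status(self) -> str:
        # Collapse community verdicts into a single overlay badge.
        verdicts = {n.verdict for n in self.notes}
        if not verdicts:
            return "unreviewed"
        if len(verdicts) > 1:
            return "disputed"
        return verdicts.pop()

    def overlay_summary(self) -> dict:
        # What the small icon next to the video would expand into.
        return {
            "first_seen": self.first_appearance().url,
            "spread": self.spread_path(),
            "status": self.status(),
            "notes": [f"{n.community}: {n.text}" for n in self.notes],
        }
```

In this sketch `status()` reports "disputed" whenever communities disagree rather than picking a winner. Surfacing disagreement instead of resolving it is one plausible design choice for an overlay, not something the article prescribes.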

Without such a layer, AI governance remains vertically integrated: the same entities build, deploy, and police the systems. The recent emergence of autonomous, AI-mediated coordination spaces such as Moltbook illustrates how quickly agent-driven ecosystems can form outside traditional platform guardrails. Even without malicious intent, semi-autonomous agents interacting, amplifying, and responding across networks can generate influence cascades that no single model safeguard or regulatory mandate can contain in real time. Without user-visible agent labeling, cross-site coordination detection, and portable trust overlays, these systems scale faster than centralized enforcement can respond.

The bridge is the smallest unit of this infrastructure: a typed connection between a fragment (clip, quote, document) and a claim or event. Bridges can be proposed as soft links and later inscribed into the permanent record. This keeps participation open while letting the market and verification layer filter what becomes durable.

Overlays show context now.

Monuments preserve evidence forever.

Bridges connect the two.
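The bridge lifecycle described above (proposed as a soft link, later inscribed into a permanent record) can be sketched as a small data structure. In this Python sketch, each monument is an append-only log for one claim, chained with SHA-256 hashes so that editing or reordering an inscribed entry is detectable. The hash chain, the three bridge types, and the endorsement threshold standing in for "the market and verification layer" are all assumptions made for illustration; the article does not specify how inscription is decided or stored.

```python
# Minimal sketch of bridges and monuments (all names hypothetical).
import hashlib
import json
from dataclasses import dataclass
from enum import Enum

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class BridgeKind(Enum):
    # Assumed bridge types; the article only says bridges are "typed".
    SUPPORTS = "supports"
    CONTRADICTS = "contradicts"
    CONTEXT = "context"


@dataclass
class Bridge:
    fragment: str            # clip, quote, or document (e.g. a URL)
    claim: str               # identifier of the claim or event it connects to
    kind: BridgeKind
    endorsements: int = 0    # hypothetical stand-in for verification signals
    inscribed: bool = False  # False while it is still a soft link


def _digest(body: dict) -> str:
    # Canonical JSON so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class Monument:
    """Append-only, hash-chained evidence log for one claim."""

    def __init__(self, claim: str):
        self.claim = claim
        self.entries: list[dict] = []

    def inscribe(self, bridge: Bridge, threshold: int = 3) -> bool:
        """Promote a soft link to a permanent entry if it clears the threshold."""
        if bridge.claim != self.claim or bridge.inscribed:
            return False
        if bridge.endorsements < threshold:
            return False  # remains a soft link for now
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"fragment": bridge.fragment, "kind": bridge.kind.value, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})
        bridge.inscribed = True
        return True

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("fragment", "kind", "prev")}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

A bridge below the threshold simply stays a soft link: it remains visible as a proposal but never enters the monument, which keeps participation open without making every proposal permanent.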

Under vertical integration, the same firms that mediate cognition become the locus of safety enforcement. Even well-intentioned regulation may harden incumbency by increasing compliance costs and reinforcing central chokepoints. History shows that liability and compliance regimes, while essential, often entrench dominant actors because only the largest firms can absorb sustained regulatory overhead at scale.

More than a critique of motives, this is a critique of scope.

When influential organizations frame AI safety exclusively through model and regulatory levers, critical harm scenarios remain structurally unaddressed. Consider a coordinated deepfake election clip that spreads across multiple platforms within hours. Even if frontier models are well-aligned and platforms comply with disclosure mandates, there is no portable, cross-site mechanism for users to see provenance, view independent verification, or access community-driven contextual overlays in real time.

Without an interface-layer trust infrastructure, harm mitigation depends entirely on the same centralized actors to detect, label, and throttle content after it has already shaped perception. A frame limited to model and regulatory levers therefore constrains the solution space, limiting which architectural interventions are treated as legitimate or worthy of investment.

That narrowing matters.

Because ossification does not happen through malice. It happens through path dependence – where early design choices harden into default structures that become difficult to reverse.

If the dominant safety narrative excludes interface-level civic infrastructure (shared verification and trust systems that operate across platforms), then corporate mediation of trust becomes normalized before alternatives mature. Alignment without reforming how trust, verification, and enforcement power are distributed across the web risks becoming containment without democratization. In other words, making models safer without shifting who controls the mediation of information may limit harm while leaving cognitive power concentrated in the same centralized actors.

The question is not whether regulation is necessary.

It is whether regulation without architectural counter-power is sufficient.

If AI poses a civilizational risk to cognitive autonomy, then safety must include the redistribution of trust and verification capacity to users and communities themselves.

Why is this lever largely absent from mainstream AI safety agendas? Some may argue that interface-layer governance is too complex, too fragmented, or too premature compared to model testing and regulatory guardrails. But complexity is not a justification for omission. If AI-mediated cognition is becoming infrastructural, then counter-infrastructure cannot be treated as optional or deferred. The difficulty of implementation is precisely why serious investment and institutional attention are required now, before centralized mediation hardens into default architecture.

If the diagnosis is existential, incremental constraint alone will not suffice. Structural problems require structural counter-power.

This is an invitation to widen the frame – not a rejection of the work already underway.

The stakes demand architectural imagination equal to the scale of the problem.

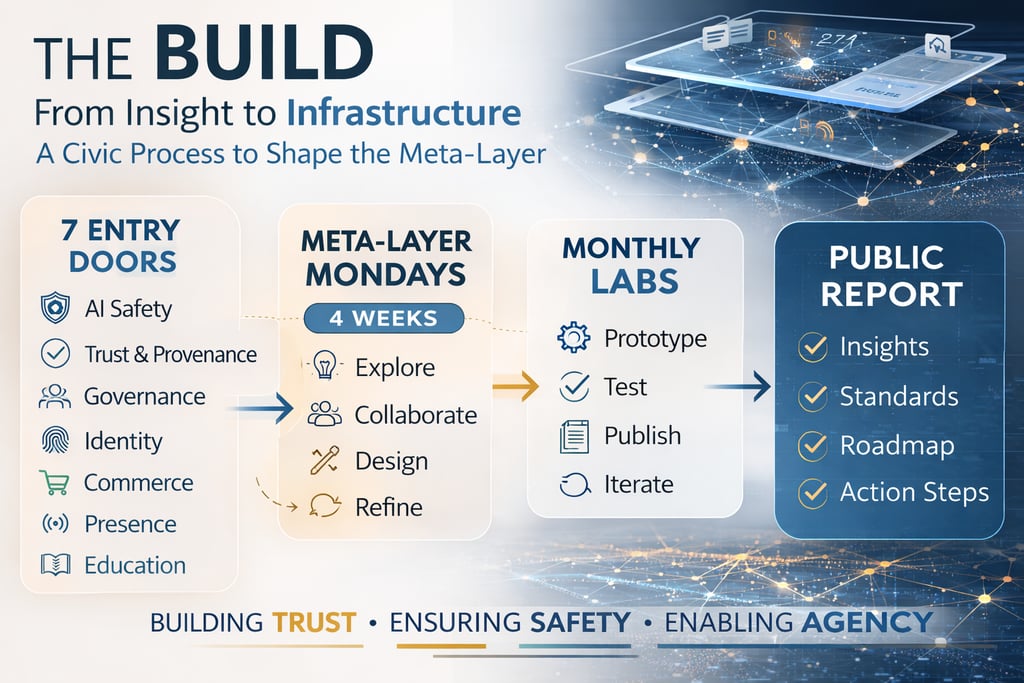

A structured civic build cycle beginning with seven consecutive daily labs – seven entry doors, each complete in itself, where you only need to attend one to meaningfully participate – followed by weekly Meta-Layer Mondays at 11:30 a.m. PST for four weeks, and then monthly Meta-Layer Mondays at 11:30 a.m. PST through a 12-month arc culminating in a public report-back.

An Invitation to Build the Next Layer

If AI safety discourse remains focused on constraining models and regulating platforms, then the responsibility for architectural counter-power shifts elsewhere.

Not to institutions alone, but to those capable of building and testing alternative layers in practice.

What has changed, and why this matters now, is that the cost of doing so has collapsed.

The primitives described here do not require centralized deployment or large-scale institutional coordination to begin. They can be prototyped incrementally, at the interface layer, using existing tools, extensions, and open systems.

This creates a different kind of opening: a construction window.

A period in which the architecture of trust, verification, and coordination on the web has not yet been fully determined.

That window is unlikely to remain open indefinitely.

As with prior phases of the internet, early patterns tend to harden into defaults.

If interface-layer trust infrastructure does not emerge during this phase, centralized mediation is likely to become further entrenched, not because it is optimal, but because it is available.

Under these conditions, the question is less whether such a layer is desirable, and more whether it will be built.

And if so, by whom, and with what assumptions.

The proposal outlined here is one possible direction: a layered approach to trust and verification, operating above the webpage and independent of any single platform.

But more important than any specific implementation is the recognition that this layer is now buildable.

That the barrier is no longer primarily technical.

And that the shape of this layer will be determined by those who choose to participate in constructing it.

May the evidence connect.

May the universe coalesce.

May shared reality emerge.

Go meta.

The Build: Constructing Interface-Layer Governance in 12 Months

If this resonates, we’re hosting a series of post–AI DOC Zoom conversations in the days following the film’s release. These are small-group sessions to reflect on what stood out, surface concerns, and explore how a layered web approach might actually change the trajectory we’re on. If you’re looking for a place to think this through with others, you’re invited to join us.

Find out about our upcoming screenings at lu.ma/ai-doc


© 2025. The Meta-Layer Initiative. All rights reserved.
